In Smoke Point, you can pick any pixel on an AlertWildfire camera image and the app will give you the heading to that point. Yesterday’s triangulation error was really bugging me. Was the problem with the one camera (Stanford Dish), with the math in the app, or with the data coming from the AlertWildfire servers?

To narrow things down, I decided to spot-check a number of cameras within the AlertWildfire fleet against known landmarks. I picked things like major intersections, buildings, island borders, and so on: things I could easily ground truth by seeing them both on the camera image and on satellite imagery.

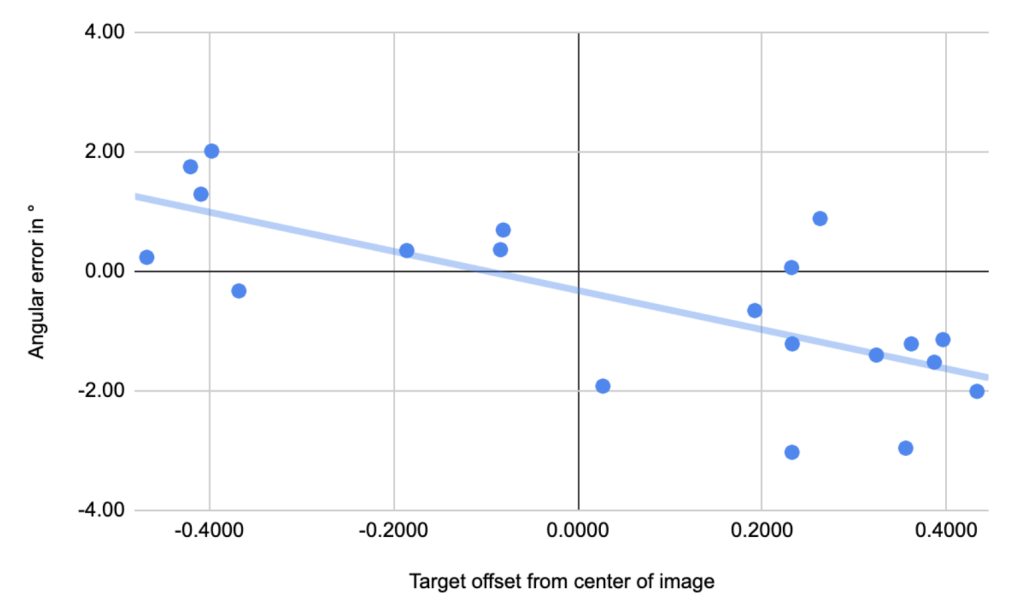

I then measured the “angular error” for each reading: how many degrees off, in one direction or the other, was the line that Smoke Point projected? I wanted to know whether these errors correlated with things such as:

- Targeting pixels on the left side of the image or the right

- Different cameras (maybe each camera has a unique error)
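To make the “angular error” measurement concrete, here is a minimal Python sketch. It assumes the error is defined as the projected heading minus the true bearing to the landmark, wrapped so that headings on either side of north compare correctly. The sign convention and the function names `true_bearing_deg` and `signed_angular_error` are my own for illustration, not anything from Smoke Point or AlertWildfire. The bearing uses the standard great-circle initial-bearing formula.

```python
import math


def true_bearing_deg(cam_lat, cam_lon, lm_lat, lm_lon):
    """Initial great-circle bearing from camera to landmark, in degrees true (0-360)."""
    phi1, phi2 = math.radians(cam_lat), math.radians(lm_lat)
    dlon = math.radians(lm_lon - cam_lon)
    y = math.sin(dlon) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlon)
    return math.degrees(math.atan2(y, x)) % 360.0


def signed_angular_error(projected_deg, true_deg):
    """Projected minus true heading, wrapped into [-180, 180).

    Assumed convention: positive means the projected line falls clockwise
    (to the right) of the landmark's true bearing.
    """
    return (projected_deg - true_deg + 180.0) % 360.0 - 180.0
```

The wrap matters near north: a projected heading of 1° against a true bearing of 359° is a 2° error, not a 358° one.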

It’s tedious work and it took me about 3 hours to collect 20 data points across 12 cameras (ugh). However, even with this small amount of data, a trend is emerging:

The math that calculates the heading for a pixel relies on a value from the AlertWildfire API that estimates the camera image’s Field of View (FOV) in degrees. Let’s say the FOV is 60°, and the camera is pointed due east (90°T). If I were to click on the leftmost pixel of the image, my reading would be 90° – (60°/2) = 60°. Similarly, clicking on the rightmost pixel would give a reading of 90° + (60°/2) = 120°.
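The arithmetic above can be sketched in a few lines of Python. This is only an illustration: it assumes the heading varies linearly across the image width, which matches the worked example at the two edges but is not confirmed to be what the app does in between. The function name and parameters are hypothetical.

```python
def pixel_to_heading(x_px, image_width_px, center_heading_deg, fov_deg):
    """Heading (degrees true) for a horizontal pixel position.

    Assumption: linear interpolation across the FOV, with x_px = 0 at the
    left edge and x_px = image_width_px - 1 at the right edge.
    """
    frac = x_px / (image_width_px - 1)      # 0.0 at left edge, 1.0 at right edge
    offset_deg = (frac - 0.5) * fov_deg     # -FOV/2 at left, +FOV/2 at right
    return (center_heading_deg + offset_deg) % 360.0


# Camera pointed due east (90°T) with a 60° FOV on a 1920-pixel-wide image.
left = pixel_to_heading(0, 1920, 90.0, 60.0)
right = pixel_to_heading(1919, 1920, 90.0, 60.0)
print(left, right)
```

If the FOV estimate from the API is too wide or too narrow, the center pixel is unaffected and the error grows toward the edges, which is why the left/right position of the target is worth tracking.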

It appears that I may need to adjust this value: when the target is off-center, I may have to add or subtract an offset proportional to its distance from the center of the image. However, I need to discuss this with the AlertWildfire folks first. There are problems with the data that reduce my confidence. First of all, the line fit is OK but not great: there is a trend, but there are outliers that I can’t explain. Correcting the error on some of the cameras only to increase it on others would be pointless.
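For readers who want to try the same kind of line fit, here is a minimal Python sketch of an ordinary least-squares fit of angular error against how far off-center the target is. Everything in it is an assumption for illustration: the position scale (−1.0 at the left edge, +1.0 at the right edge), the function names, and especially the sample numbers, which are made-up placeholders and not my measurements.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit ys ~ intercept + slope * xs.

    Returns (slope, intercept, r_squared).
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    r_squared = 1.0 - ss_res / ss_tot if ss_tot else 1.0
    return slope, intercept, r_squared


def correct_heading(heading_deg, position, slope, intercept):
    """Subtract the predicted error (projected minus true) from a projected heading."""
    return (heading_deg - (intercept + slope * position)) % 360.0


# Placeholder values for illustration only, NOT the measured data.
positions = [-1.0, -0.5, 0.0, 0.5, 1.0]    # hypothetical off-center positions
errors_deg = [-1.8, -1.2, 0.1, 0.9, 2.0]   # hypothetical angular errors

slope, intercept, r_squared = fit_line(positions, errors_deg)
print(slope, intercept, r_squared)
```

In this framing the intercept plays the role of a fixed offset and the slope is the part that grows as the target moves off-center. A low R² is the signal that a single correction like this would help some readings and hurt others.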

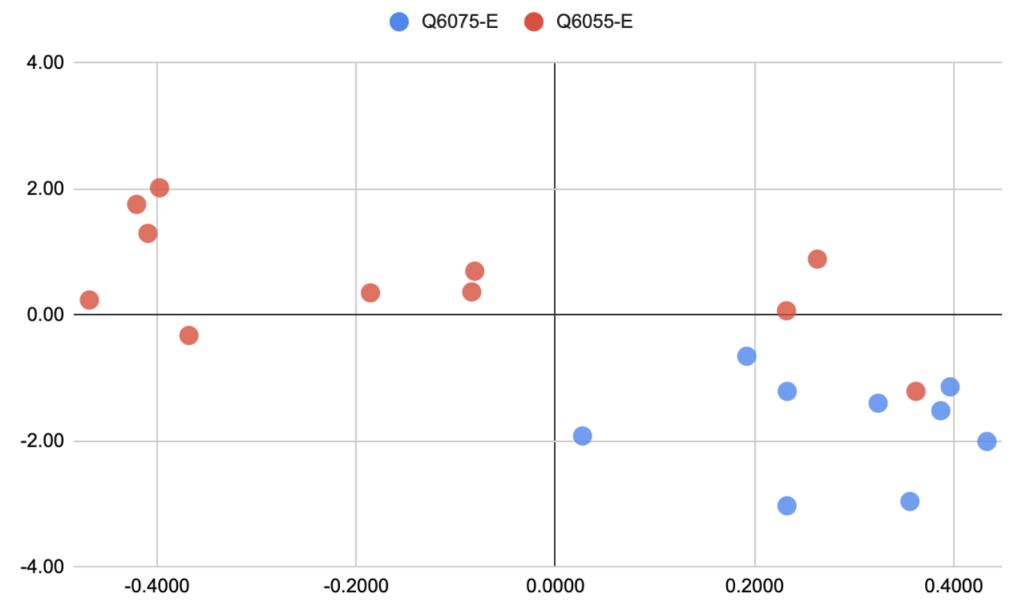

For example, here is the same chart as above but colored by the hardware model of the wildfire camera:

See all the blue dots on the right? This means my data isn’t well distributed: there could be one trend for one camera model and a different trend for the other. I can’t know without collecting a lot more data, and it’s tedious to collect.

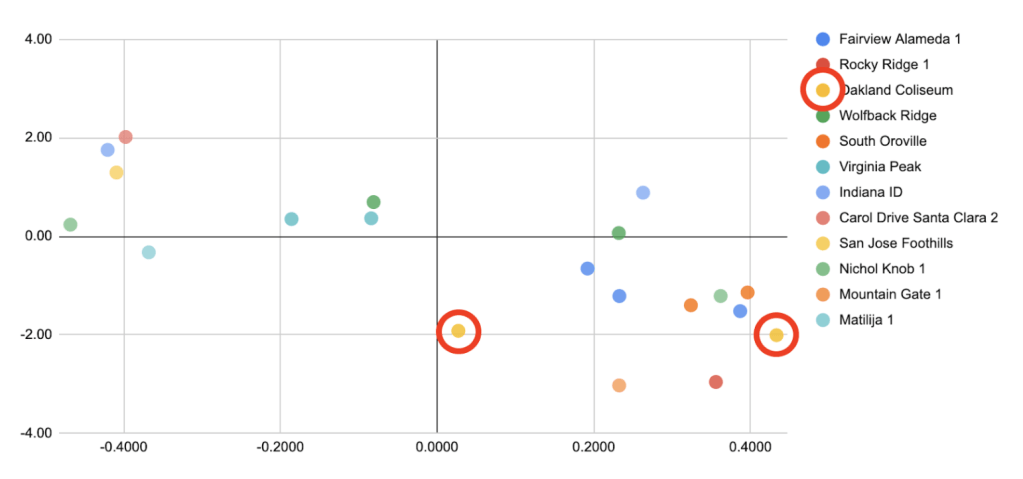

Here is the same chart but colored by the source camera:

In red I have highlighted the data points from one outlier camera that does not exhibit the trend (Oakland Coliseum). In fact, from this limited data it appears that Oakland Coliseum has a fixed -2° error. Does it really, though? A more thorough analysis would be needed.

Update May 18: after discussing these findings with the experts, I have learned that several sources of error contribute to problems like the ones observed above. Every camera is mounted somewhere, and that mounting job doesn’t always start out perfect, nor does it stay perfect. The camera can:

- Be out-of-adjustment from left to right, meaning it will have a fixed offset error.

- Be tilted, meaning that it will have error that changes depending on where it is pointed, and where on the image you have chosen a pixel.
